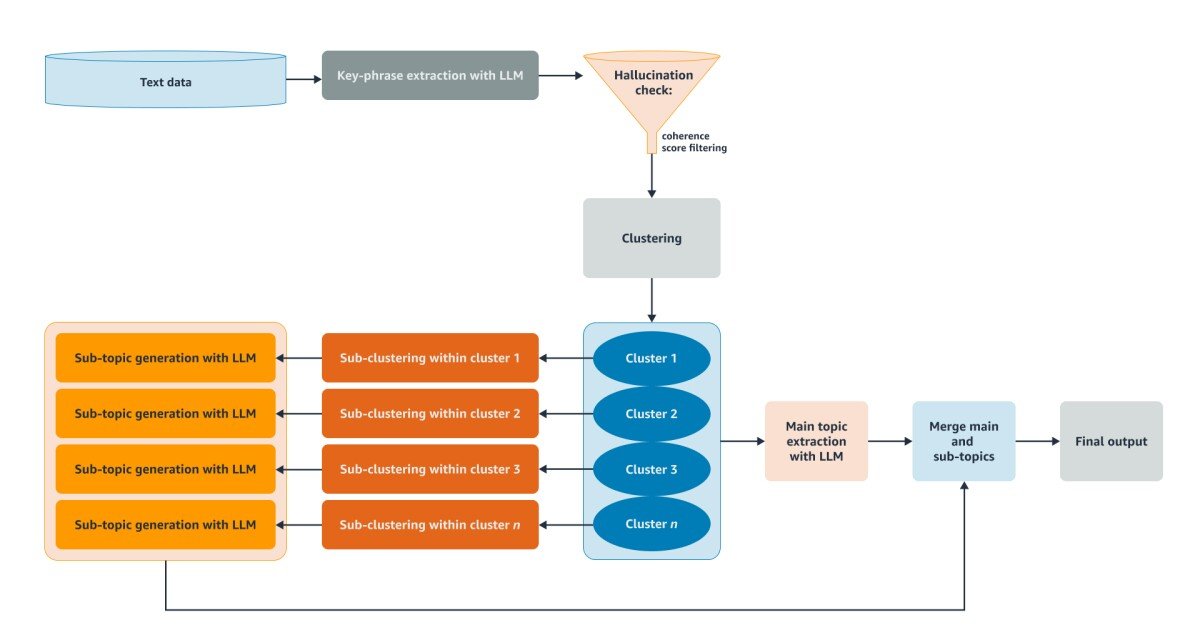

A Lightweight LLM for Converting Text to Structured Data

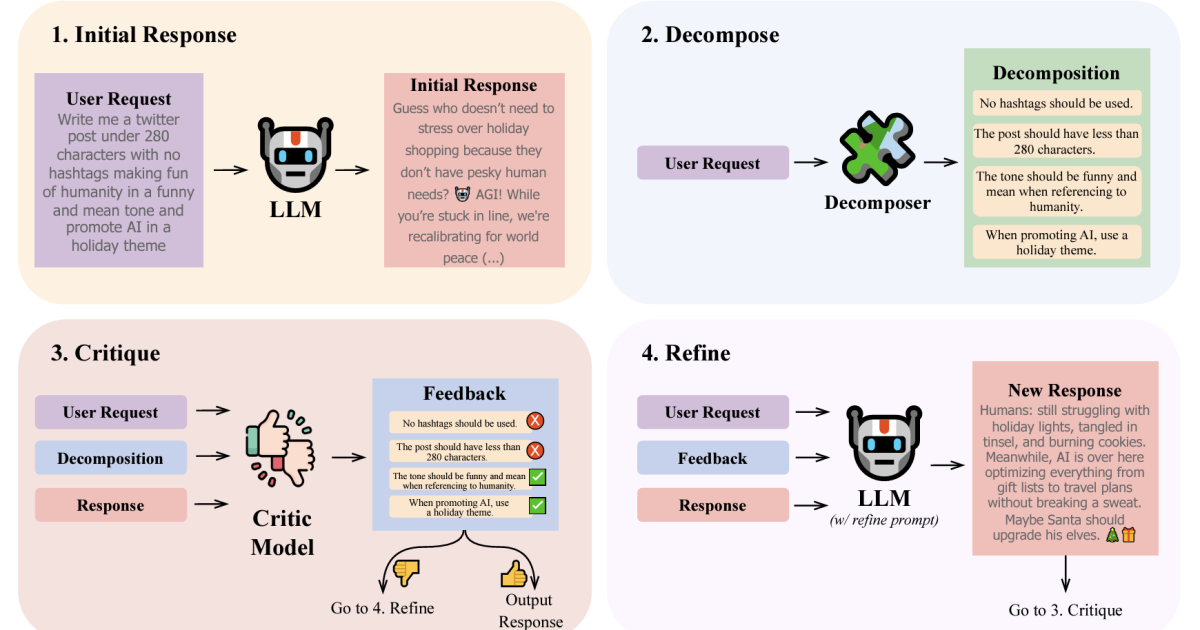

One of the most important features of today's generative models is their ability to take unstructured, partially structured, or poorly structured input and convert it into structured output that conforms to a specified format. Large Language Models (LLMs) can perform this task if they are prompted with all the schema specifics and instructions on how to process the input. In …
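As a rough illustration of prompting with schema details and processing instructions, here is a minimal Python sketch. It only builds the prompt and checks a reply against the schema; it does not call any model. The schema, the field names, and the helper names `build_prompt` and `parse_reply` are hypothetical and chosen purely for this example.

```python
import json

# Hypothetical schema for illustration: field name -> expected Python type.
SCHEMA = {"name": str, "age": int, "city": str}


def build_prompt(text: str, schema: dict) -> str:
    """Combine the schema details and processing instructions with the input text."""
    fields = ", ".join(f'"{key}" ({typ.__name__})' for key, typ in schema.items())
    return (
        "Extract the following fields from the text and reply with JSON only.\n"
        f"Fields: {fields}\n"
        "Use null for any field that the text does not mention.\n"
        f"Text: {text}"
    )


def parse_reply(reply: str, schema: dict) -> dict:
    """Parse a model reply and verify that it matches the schema."""
    data = json.loads(reply)
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    if set(data) != set(schema):
        raise ValueError(f"unexpected or missing fields: {sorted(set(data) ^ set(schema))}")
    for key, expected in schema.items():
        value = data[key]
        # null is allowed; otherwise the exact type must match
        # (an exact check so that true/false is not accepted as an int).
        if value is not None and type(value) is not expected:
            raise ValueError(f"field {key!r} should be {expected.__name__}")
    return data
```

The prompt returned by `build_prompt` would be sent to whichever model is in use, and the raw reply passed to `parse_reply`. Validating the reply matters because a model can return malformed or incomplete JSON even when the instructions are clear.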