Low-precision arithmetic makes robot localization more efficient

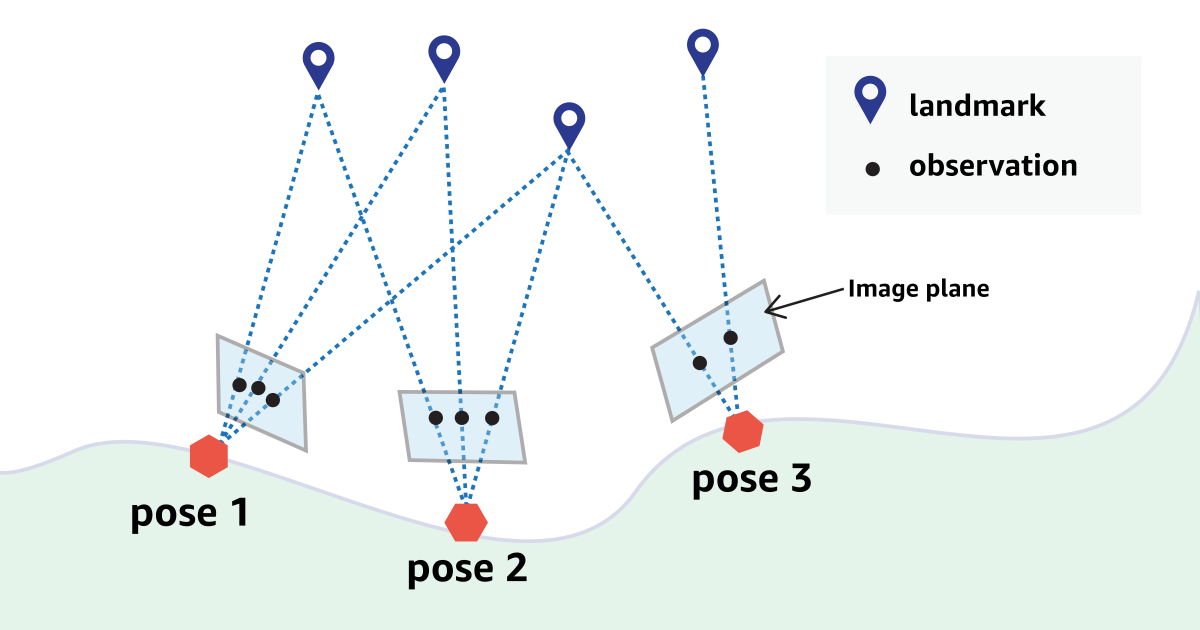

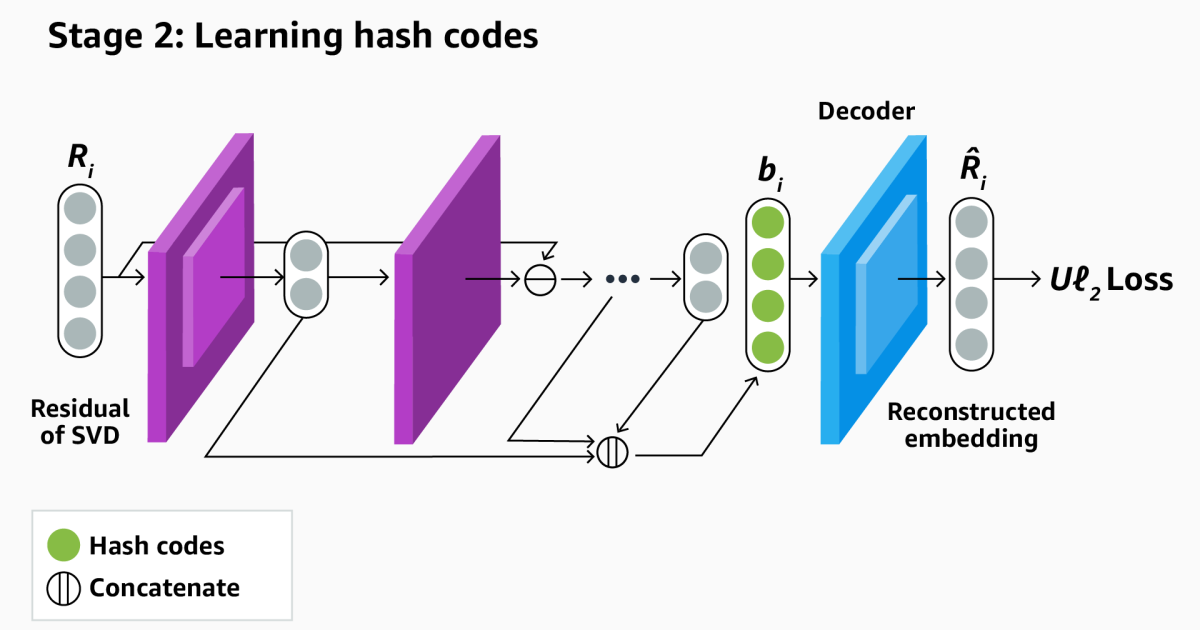

Simultaneous localization and mapping (SLAM) is a core technology of autonomous mobile robots. It involves building a map of the robot’s environment while simultaneously finding the robot’s location within this map. SLAM is computationally intensive, and implementing it on resource-constrained robots, such as consumer household robots, generally requires techniques to make calculations …