Modern warehouses rely on complex networks of sensors to enable safe and effective operations. These sensors must detect everything from packages and containers to robots and vehicles, often in changing environments with varying lighting conditions. More important for Amazon, we need to be able to detect barcodes effectively.

The Amazon Robotics ID (ARID) team focuses on solving this problem. When we first started working on it, we faced a significant bottleneck: optimizing sensor placement required weeks or months of physical prototyping and real-world testing, which severely limited our ability to explore innovative solutions.

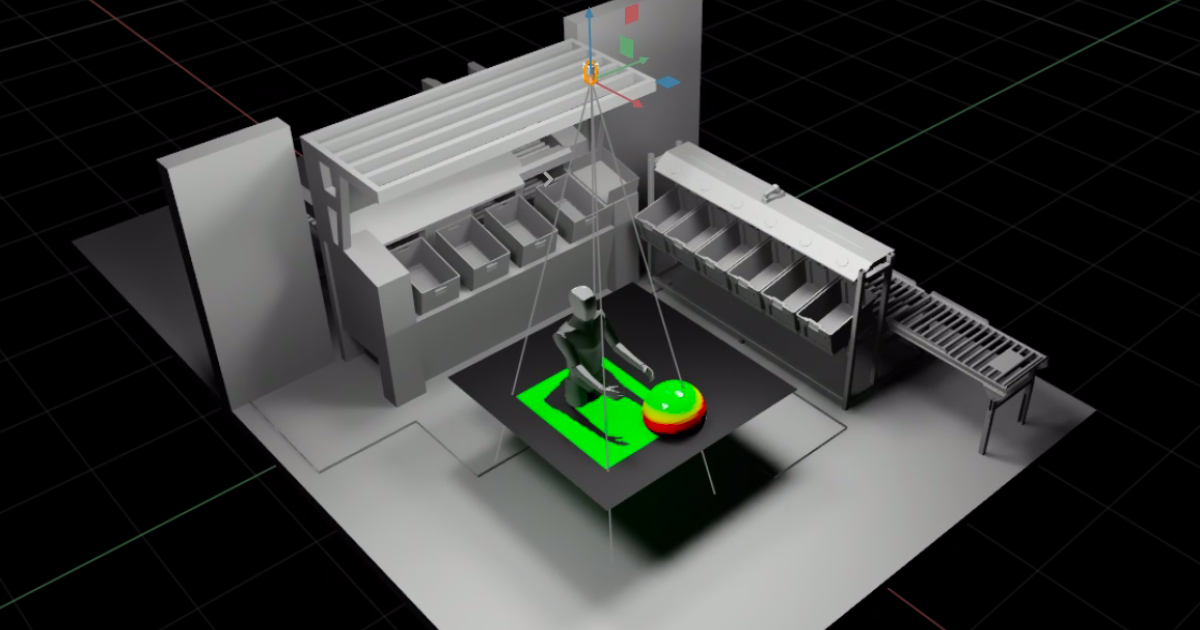

To transform this process, we developed Sensor Workbench (SWB), a sensor simulation platform built on NVIDIA’s Isaac Sim that combines parallel processing, physics-based sensor modeling, and high-fidelity 3-D environments. By providing virtual test environments that mirror real-world conditions with unprecedented accuracy, SWB allows our teams to explore hundreds of configurations in the same time it used to take to test only a few physical setups.

Camera and target selection/positioning

Sensor Workbench users can choose different cameras and targets and place them in 3-D space to receive real-time feedback on barcode decodability.

Three key innovations enabled SWB: a specialized parallel-computing architecture that performs simulation tasks across the GPU; a custom CAD-to-OpenUSD (Universal Scene Description) pipeline; and the use of OpenUSD as the ground truth throughout the simulation process.

Parallel-Computing Architecture

Our parallel-processing pipeline uses NVIDIA’s Warp library with custom compute kernels to maximize GPU utilization. By keeping 3-D objects persistent in GPU memory and updating transforms only when objects move, we eliminate redundant data transfers. We also perform calculations only when needed: when, for example, a sensor parameter changes or something moves. In these ways we achieve real-time performance.
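The "compute only when needed" idea can be illustrated with a dirty-flag cache. The following is a minimal CPU-only sketch in plain Python, not SWB's Warp-based implementation; the class name `CachedSensorMetric`, its fields, and the placeholder metric are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CachedSensorMetric:
    """Recomputes a metric only when one of its inputs has changed."""

    sensor_fov_deg: float
    object_position: tuple
    _dirty: bool = field(default=True, init=False)
    _cached: float = field(default=0.0, init=False)
    recompute_count: int = field(default=0, init=False)

    def set_fov(self, fov_deg: float) -> None:
        # A sensor parameter change invalidates the cached result.
        if fov_deg != self.sensor_fov_deg:
            self.sensor_fov_deg = fov_deg
            self._dirty = True

    def move_object(self, position: tuple) -> None:
        # Only a real change of position invalidates the cached result.
        if position != self.object_position:
            self.object_position = position
            self._dirty = True

    def value(self) -> float:
        # Recompute lazily; repeated reads of an unchanged scene are free.
        if self._dirty:
            self._cached = self._expensive_compute()
            self._dirty = False
            self.recompute_count += 1
        return self._cached

    def _expensive_compute(self) -> float:
        # Placeholder for a GPU kernel launch (assumption): squared
        # distance from the origin scaled by the field of view.
        x, y, z = self.object_position
        return (x * x + y * y + z * z) * self.sensor_fov_deg


metric = CachedSensorMetric(60.0, (1.0, 2.0, 2.0))
metric.value()                        # first read triggers a computation
metric.value()                        # second read reuses the cache
metric.move_object((0.0, 3.0, 4.0))   # movement marks the result stale
metric.value()                        # recomputed once for the new position
```

In a GPU setting the same pattern would decide whether a kernel is launched and whether a transform is re-uploaded, so idle frames cost almost nothing.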

Visualization methods

Sensor Workbench users can choose sphere- or plane-based visualizations to see how the positions and rotations of individual barcodes affect performance.

This architecture allows us to perform complex calculations for multiple sensors simultaneously, enabling immediate feedback in the form of immersive 3-D visuals. These visuals represent the metrics that barcode-detection machine learning models need in order to work, and they update as teams adjust sensor positions and parameters in the environment.
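As an illustration of how a barcode's rotation can feed a per-sample metric, the sketch below computes a simple foreshortening factor: the cosine of the angle between the barcode's surface normal and the direction to the camera. This proxy is an assumption chosen for illustration, not the metric SWB computes, and the function names are hypothetical.

```python
import math


def foreshortening(barcode_pos, barcode_normal, camera_pos):
    """Cosine of the angle between the barcode normal and the direction
    to the camera. 1.0 is a head-on view; values <= 0 face away."""
    to_cam = tuple(c - b for c, b in zip(camera_pos, barcode_pos))
    dist = math.sqrt(sum(x * x for x in to_cam))
    n_len = math.sqrt(sum(x * x for x in barcode_normal))
    if dist == 0.0 or n_len == 0.0:
        raise ValueError("camera and barcode must differ; normal must be non-zero")
    return sum(t * n for t, n in zip(to_cam, barcode_normal)) / (dist * n_len)


def sweep_tilt(camera_pos, barcode_pos, tilts_deg):
    """Evaluate the proxy while tilting the normal away from +z
    within the y-z plane, one value per tilt angle."""
    results = {}
    for tilt in tilts_deg:
        r = math.radians(tilt)
        normal = (0.0, math.sin(r), math.cos(r))
        results[tilt] = foreshortening(barcode_pos, normal, camera_pos)
    return results


# Camera one unit above a barcode at the origin.
scores = sweep_tilt((0.0, 0.0, 1.0), (0.0, 0.0, 0.0), [0, 30, 60, 90])
```

Each sample is independent of the others, which is the property that makes this kind of sweep a natural fit for one GPU thread per barcode pose.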

CAD to USD

Our second innovation involved the development of a custom CAD-to-OpenUSD pipeline that automatically converts detailed warehouse models into optimized 3-D assets. Our CAD-to-USD conversion pipeline replicates the structure and content of models created in the SolidWorks modeling program with a 1:1 mapping. We start by extracting essential data (including world transforms, mesh geometry, material properties, and joint information) from the CAD file. The full assembly-and-part hierarchy is preserved so that the resulting USD stage mirrors the CAD tree structure exactly.

To ensure modularity and maintainability, we organize the data in separate USD layers covering mesh, materials, joints, and transforms. This layered approach ensures that the converted USD file faithfully preserves the asset structure, geometry, and visual fidelity of the original CAD model, enabling accurate and scalable integration for real-time visualization, simulation, and collaboration.
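The layering idea can be sketched without any USD dependency. The example below walks a hypothetical CAD tree and writes each kind of data into its own dictionary, keyed by a prim-style path that mirrors the hierarchy. `CadNode`, `convert_to_layers`, and the asset names are illustrative assumptions; a real pipeline would author USD layers through the OpenUSD API and would carry full transform matrices rather than the translation-only tuples used here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CadNode:
    """Hypothetical stand-in for one assembly or part in a CAD tree."""

    name: str
    transform: tuple = (0.0, 0.0, 0.0)  # simplified: translation only
    mesh: Optional[str] = None
    material: Optional[str] = None
    joint: Optional[str] = None
    children: list = field(default_factory=list)


LAYER_NAMES = ("transforms", "mesh", "materials", "joints")


def convert_to_layers(root: CadNode) -> dict:
    """Split a CAD tree into per-concern layers keyed by hierarchy path."""
    layers = {name: {} for name in LAYER_NAMES}

    def visit(node: CadNode, parent_path: str) -> None:
        # The path repeats the assembly/part nesting one-to-one.
        path = f"{parent_path}/{node.name}"
        layers["transforms"][path] = node.transform
        if node.mesh is not None:
            layers["mesh"][path] = node.mesh
        if node.material is not None:
            layers["materials"][path] = node.material
        if node.joint is not None:
            layers["joints"][path] = node.joint
        for child in node.children:
            visit(child, path)

    visit(root, "")
    return layers


station = CadNode("Station", children=[
    CadNode("Conveyor", transform=(0.0, 1.0, 0.0), children=[
        CadNode("Roller", mesh="roller.obj", material="steel", joint="revolute"),
    ]),
    CadNode("Frame", mesh="frame.obj", material="aluminum"),
])
layers = convert_to_layers(station)
```

Because every layer uses the same paths, one concern (say, materials) can be regenerated or overridden without touching the others.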

OpenUSD as Ground Truth

The third important factor was our novel approach to using OpenUSD as the ground truth throughout the simulation process. We developed custom schemas that extend beyond basic 3-D asset information to include enriched environment descriptions and simulation parameters. Our system continuously records all scene activity, from sensor positions and orientations to object movements and parameter changes, directly to the USD stage in real time. We even keep user interface elements and their states within USD, which allows us to restore not only the simulation configuration but also the complete user interface state.

This architecture ensures that when USD initial configurations change, the simulation automatically adjusts without requiring changes to the core software. By maintaining this live synchronization between the simulation state and the USD representation, we create a reliable source of truth that captures the complete state of the simulation environment, allowing users to save and restore simulation configurations exactly as needed. The interfaces simply reflect the state of the world, which creates a flexible and maintainable system that can evolve with our needs.
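The single-source-of-truth pattern described above can be sketched with a small state store: every change, including UI state, is written to one place; listeners such as the UI only react to it; and a saved snapshot restores everything. This is a plain-Python analogy, not SWB code or the OpenUSD API. `SceneState`, the attribute paths, and the JSON snapshot format are assumptions.

```python
import json


class SceneState:
    """Single store that simulation and UI both write to and read from."""

    def __init__(self):
        self._attrs = {}
        self._listeners = []

    def subscribe(self, callback):
        # Listeners (for example, UI widgets) only reflect the store.
        self._listeners.append(callback)

    def set(self, path, value):
        self._attrs[path] = value
        for callback in self._listeners:
            callback(path, value)

    def get(self, path):
        return self._attrs[path]

    def save(self) -> str:
        # Values must be JSON-serializable (lists rather than tuples).
        return json.dumps(self._attrs, sort_keys=True)

    def restore(self, snapshot: str) -> None:
        # Replay every saved attribute so listeners resynchronize.
        # Simplification: listeners are not told about removed paths.
        self._attrs.clear()
        for path, value in json.loads(snapshot).items():
            self.set(path, value)


state = SceneState()
ui_log = []
state.subscribe(lambda path, value: ui_log.append((path, value)))

state.set("/World/Camera_0.position", [0.0, 1.5, 2.0])
state.set("/UI/Panel.visualization", "sphere")
snapshot = state.save()

state.set("/UI/Panel.visualization", "plane")
state.restore(snapshot)  # the UI setting returns to "sphere" along with the scene
```

The design choice worth noting is that the UI never holds state of its own, so restoring the store is sufficient to restore the interface.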

Application

With SWB, our teams can now quickly evaluate sensor mounting positions and verify overall concepts in a fraction of the time previously required. More important, SWB has become a powerful platform for cross-functional collaboration, giving engineers, researchers, and operations teams the opportunity to work together in real time, visualizing and adjusting sensor configurations while immediately seeing the impact of their changes and sharing their results with each other.

New views

In projection mode, there is no need for an explicit target. Instead, Sensor Workbench uses the entire environment as a target, projecting rays from the camera to identify locations for barcode placement. Users can also switch between a comprehensive three-quarters view and the perspectives of individual cameras.
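Projecting rays from a camera onto the environment reduces, in the simplest case, to ray-plane intersection. The sketch below fans a grid of rays across a camera's field of view and collects where they hit a single planar surface. It is a minimal geometric illustration under stated assumptions (a camera looking along -z, one infinite plane as the "environment", hypothetical function names), not SWB's projection code.

```python
import math


def ray_plane_hit(origin, direction, plane_point, plane_normal):
    """Return the point where a ray meets a plane, or None if the ray
    is parallel to the plane or the plane lies behind the origin."""
    denom = sum(d * n for d, n in zip(direction, plane_normal))
    if abs(denom) < 1e-9:
        return None
    offset = tuple(p - o for p, o in zip(plane_point, origin))
    t = sum(f * n for f, n in zip(offset, plane_normal)) / denom
    if t <= 0.0:
        return None
    return tuple(o + t * d for o, d in zip(origin, direction))


def project_camera_grid(origin, half_fov_deg, steps, plane_point, plane_normal):
    """Cast steps x steps rays from a camera looking along -z and
    return the hit points on the plane (steps must be at least 2)."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    tan_half = math.tan(math.radians(half_fov_deg))
    hits = []
    for i in range(steps):
        for j in range(steps):
            # Map grid indices to [-1, 1] and scale by the field of view.
            u = (2.0 * i / (steps - 1) - 1.0) * tan_half
            v = (2.0 * j / (steps - 1) - 1.0) * tan_half
            hit = ray_plane_hit(origin, (u, v, -1.0), plane_point, plane_normal)
            if hit is not None:
                hits.append(hit)
    return hits


# Camera two units above a floor at z = 0, with a 90-degree field of view.
floor_hits = project_camera_grid(
    (0.0, 0.0, 2.0), 45.0, 3, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
)
```

A full scene would replace the single plane with mesh intersection tests, but the per-ray independence is the same, so the rays can be evaluated in parallel.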

Following the initial success in simulating barcode-reading scenarios, we have expanded SWB’s capabilities to incorporate high-fidelity lighting simulations. This allows teams to iterate on new baffle and lighting designs, further optimizing the conditions for reliable barcode detection while ensuring that lighting conditions are also safe for human eyes. Teams can now explore different lighting conditions, target positions, and sensor configurations at once, gaining insights that would take months to gather through traditional testing methods.

Looking ahead, we are working on several exciting improvements to the system. Our current focus is on integrating more advanced sensor simulations that combine analytical models with real-world measurement feedback from the ARID team, further increasing the accuracy and practical usefulness of the system. We are also investigating the use of AI to suggest optimal sensor placements for new station designs, which could identify novel configurations that users of the tool might not otherwise consider.

In addition, we are looking to expand the system to act as a comprehensive synthetic-data generation platform. This will go beyond just simulating barcode-detection scenarios, providing a complete digital environment for testing sensors and algorithms. This capability will allow teams to validate and train their systems using diverse, automatically generated data sets that capture the full range of conditions they might encounter in real-world operations.

By combining advanced scientific computing with practical industrial applications, SWB represents a significant step forward in warehouse automation development. The platform shows how sophisticated simulation tools can dramatically speed up innovation in complex industrial systems. As we continue to enhance the system with new capabilities, we are excited about its potential to further transform and set new standards for warehouse automation.