Deep learning models are data-driven and this data may contain sensitive information that requires privacy protection. Differential Privacy (DP) is a formal framework for eradicating the privacy of individuals in data sets so that opponents of users cannot learn a given data tray was or was not used to train a machine learning model. Use of DP in deep learning typically means to limit the contribution that each training sample makes to the model’s parameter adjustments, an approach called mowing per day. Sample.

However, per-test-gradient clipping makes deep learning much more time-consuming than it would otherwise be, which prevents the development of large DP models to the occurrence at the level of GPT language models with billions of parameters.

In 2022, in workshops at International Conference on Machine Learning (ICML) and the conference on neural information processing systems (Neurips), we presented two papers that promote DP for deep learning. In the first paper, “Automatic clipping: Differentialily Private Deep Learning made easier and stronger,” we described an automatic method that improves the effectiveness of setting the gradient clipping process with an order of magnitude (says 5-10 times).

Typically, gradient clipping involves an expensive ablation examination to choose a clip threshold over which a Data Røve’s contribution to the model’s parameter adjustments is cut off or cut. Our approach uses normalization instead, eliminating the setting of the cliff threshold.

In the second paper, “Differentially private bias-term only fine-tuning of foundation models” (DP-BitFit), which won the best paper price at the Neurips workshop on reliable and socially responsible machine learning (TSRML), we introduced Bitfit, a parameter fine-tuning during DP learning.

Generally, a neural network has two types of parameters: the weights that make up more than 99% of the parameters and catch most of the information from the training data, and the bias that switches (displaces) model output. We show that private fine-tuning of the bias conditions alone is enough to achieve high accident during DP restrictions, make DP learning 2 to 30 times faster, reduce memory use by 50% to 88% and only curled 1/1000 communication costs in the distributed environment.

Together, these two techniques have done fine tuning a DP-GPT-2 as effective as fine tuning a standard GPT-2 in a parameter effect way. We have made both methods publicly available to encourage researchers to experience and take advantage of faster DP Deep Learning.

Automatic mowing

The included dyblæring process has Tuvable Hyperparameter called the learning speed, which determines the extent to which the model weights can be changed during updates. The per. Sample-gradient clip threshold is the same, but it imposed on a limit for has per. Trial base. The existing approach to DP training requires an ablation examination to simultaneously set the cliff threshold and learning speed. As such, if K (Say, five, in practice) Different cliff thresholds evaluated, this makes the model’s hyperparameter -tuning stage K Times more expensive.

To solve this problem, we automatically introduced mowing using gradient normalization Intead of PR. Testing gradient clipping. This (1) eliminates the clip threshold, (2) enlarges the small gradients not cut and (3) optimizes the proof. Equipped with our automatic clipping, DP-Stochastic-Gradient Discharge Optimization algorithm (DP-SGD) has the same asymptotic convergency speed as standard (non-DP) SGD, even in the non-convex optimization setting where the deep learning optimization lines.

Our experiences across multiple computer vision and language tasks show that automatic mowing can achieve advanced DP accuracy, with PR. PR.

DP-BitFit

The first advantage of differentiated private bias-term fine-tuning (dp-bitfit) is that it is agnostic model; We can apply it to any model by simply freezing all weights during fine tuning and only updating the bias conditions. In sharp contrast, prior alternatives such as low rank adapped (LORA) and adapters apply exclusively to transformers and involve extra setting of adapption rows.

The second advantage of DP-BitFit is its parameter efficiency. In a study that spans a number of foundation models, we found that Bias -Tarms made up only wood 0.1% of model parameters. This means that DP-Bitfit provides great efficiency improvisers in terms of exercise time, memory imprints and communication costs in the distributed learning setting.

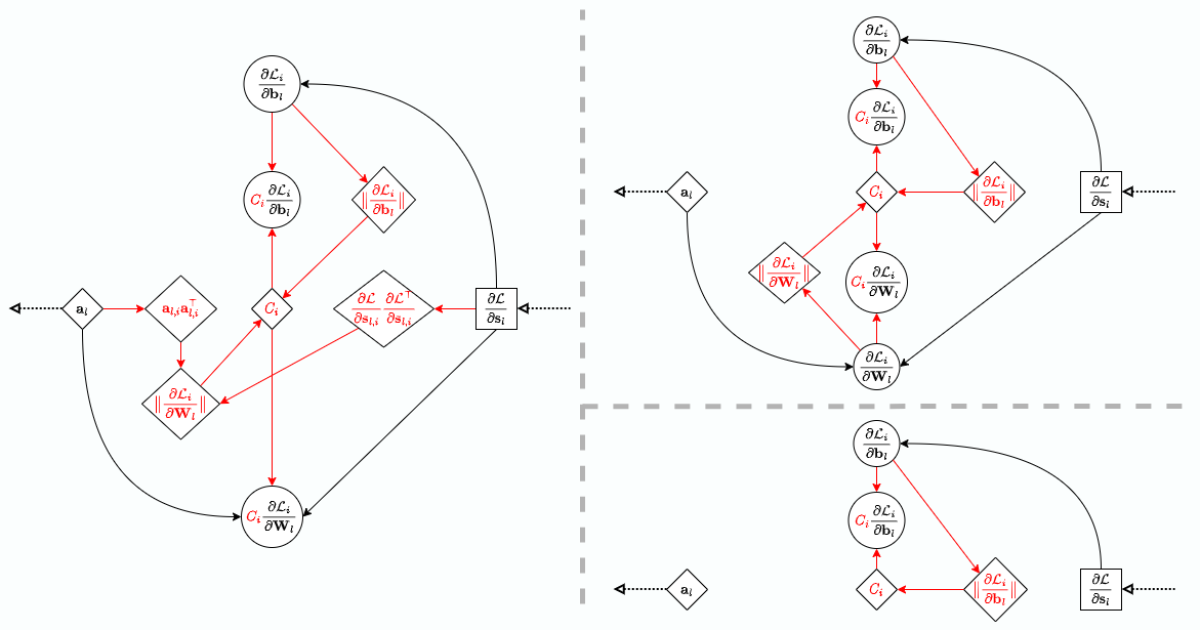

The third advantage of DP-BitFit is its calculation advantage over other parameters effect methods, such as DP-Lora. Even if both are approaching fine-tuned about the same number of parameters, DP-Bitfit still enjoys a major advantage in memory savings as it does not have to save and access exensive activation details when calculating the bias degrees; It is inaccessible when calculating weight gradients. We verify this carefully through the chain rules for the rear propagation, where DP-Bitfit has a much simpler computer graph because activation cleaners are not used.

Empirically, we have observed a significant boost in effectively when switching from dp full tuning to dp-bitfit, while we are still kef-to-art accuracy on large foundation models such as GPT-2-WORM, reset 152, Roberta-Large and Vision Transformers. For example, we compare DP-BitFit with DP full fine tuning and observe a four to ten times speedup and one two to ten times memory saving on GPT-2.

Recognitions: We would like to acknowledge our Coauthors on the papers for their contribution: Sheng Zha and George Karypis. We thank Huzefa Rangwala for reviewing this post.